Your Brain Detects Fake Faces in 170 Milliseconds. Here's Why That Kills Your Ads

EEG research suggests your brain registers AI-generated faces before you're conscious of it. Learn why this subconscious reaction may be hurting your ad hook rates.

A research team at the University of Sydney showed 50 faces to a group of participants and asked them to identify which ones were real and which were AI-generated. The participants got it right 37% of the time, which is worse than flipping a coin. They couldn't tell.

But their brains could.

When the researchers recorded neural activity with EEG (electroencephalography), decoding of the participants' brain signals identified the fakes 54% of the time. That is a modest hit rate, but the gap between conscious identification and subconscious neural response was statistically significant. Your brain knows something is off about an AI face even when you, the person looking at it, have no idea.

For anyone spending money on video ads, this finding changes the game. It means the question isn't whether your audience can "tell" your b-roll is AI-generated. It's whether their brain can. And at 170 milliseconds, it has already decided before their thumb stops scrolling.

Photo by Look Studio on Unsplash

Real human expression. Your brain processes it differently than AI-generated alternatives.

The 170-Millisecond Signal

The EEG data from the University of Sydney study shows when the brain's response diverges between real and synthetic faces. According to the researchers, brain activity differed depending on whether participants were looking at real or artificial faces, and the difference became noticeable approximately 170 milliseconds after the faces appeared on screen.

That 170ms marker isn't arbitrary. It corresponds to a well-documented brain signal called the N170, a negative-going component of the event-related potential that peaks roughly 170 milliseconds after a face appears and is sensitive to the arrangement and spacing of facial features. Your brain has dedicated neural hardware for reading faces. It has been optimized by millions of years of evolution. And it fires before conscious thought even enters the picture.

A separate study published in Scientific Reports, a Nature Portfolio journal, points the same way using a different methodology. Researchers used steady-state visual evoked potentials (SSVEP) to measure how the brain responds to faces at different levels of realism, from simple cartoons to photographs. They found a non-linear, U-shaped relationship: the most abstract images and the most realistic photographs both produced strong neural responses. But images in the middle, the "almost real but not quite" zone, produced a distinctly different pattern.

The critical takeaway: EEG decoding differences between AI-generated and real human faces were present even when participants did not consciously report those differences.

What Happens in Those 170 Milliseconds

To understand why this matters for advertising, you need to understand what the brain is actually doing in that fraction of a second.

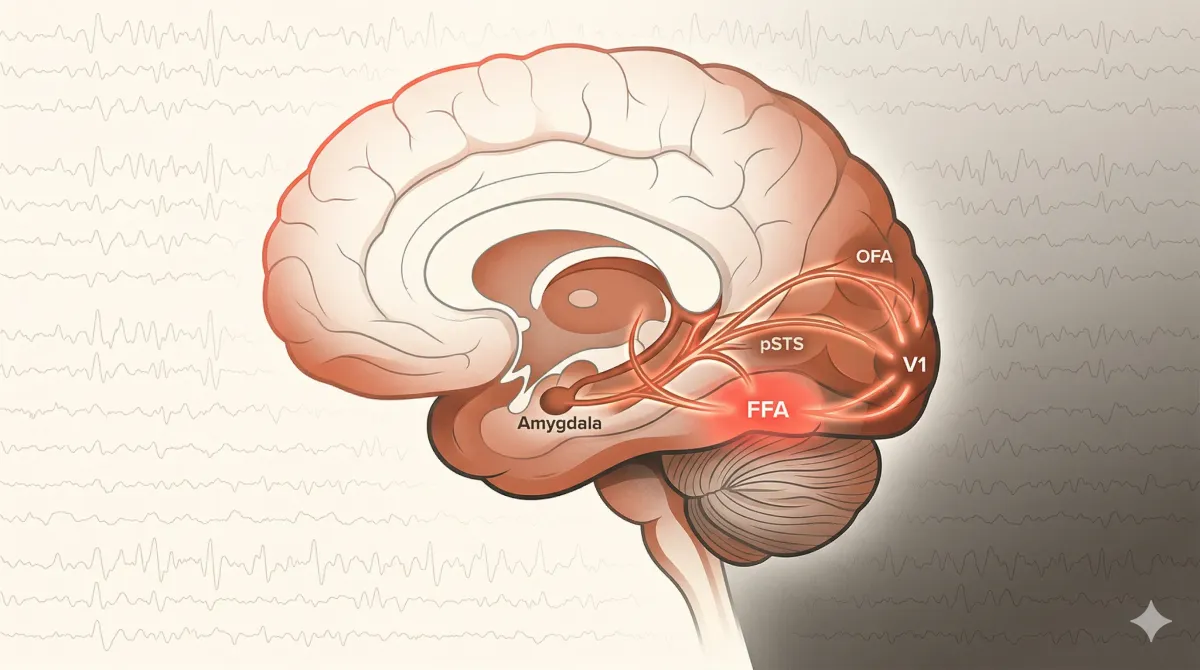

The fusiform face area (FFA) in the ventral occipito-temporal cortex is a region that responds with high selectivity to faces. When you see a face, the FFA activates and begins processing configural information: the spacing between the eyes, the proportions of the nose relative to the mouth, the symmetry (or lack of symmetry) of the overall structure.

This processing is automatic. You don't choose to do it. You can't turn it off. And it's exquisitely sensitive to deviation from what a real human face looks like.

Researchers MacDorman and Diel found strong support for a theory called configural processing, the idea that uncanny valley reactions are caused by our sensitivity to the positioning and size of human facial features. A related theory, perceptual mismatch, explains the discomfort we feel when one feature looks realistic but another doesn't. Think: realistic eyes paired with subtly wrong skin texture. This specific incongruity is a common artifact in AI-generated imagery.

The brain's face-processing system activates within milliseconds, long before conscious awareness.

From an evolutionary standpoint, this sensitivity makes sense. Our ancestors needed to quickly assess whether a face was healthy, trustworthy, or threatening. Small deviations from normal facial features and movements increase eeriness, according to research by Seyama and Nagayama. Even minor imperfections in human-like characters trigger discomfort. This is not a modern quirk. It is a survival mechanism.

The Scroll Behavior Connection

Now connect this neuroscience to what happens in a TikTok or Instagram feed.

Research from the Digital Consumer Behaviour Report shows that viewers take roughly 1.5 seconds to decide if content is worth their time. That window is nearly nine times longer than the 170ms it takes the face-processing response to fire. By the time the viewer decides, the brain has long since formed an implicit assessment of whether the face on screen is real.

If the assessment comes back "something's off," the result is not a conscious thought like "that looks AI-generated." It's a feeling. A slight unease. A lack of connection. And that feeling translates directly into a behavioral outcome: the thumb keeps moving.

The performance data points the same way. In a six-brand analysis, SendShort found that creatives with human presenters and native overlays outperformed polished, brand-heavy versions on hook rate by 5 to 10 points. The pattern fits the neuroscience: real human faces draw a stronger response from the brain, and that response shows up as measurably longer attention.

Facebook's own data confirms the downstream effect: nearly half of viewers who stay for three seconds will watch for thirty. That means if your opening face triggers even a subtle uncanny response, you're losing not just the hook but the entire view.
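A quick back-of-the-envelope calculation shows how a hook rate gap carries through to longer views. The Python sketch below is illustrative only: the impression count and the 25% baseline hook rate are hypothetical, hook rate is assumed to mean the share of impressions that reach three seconds, and the 0.5 retention factor rounds "nearly half" up slightly. Only the 5-to-10-point uplift comes from the analysis cited above.

```python
def thirty_second_views(impressions: int, hook_rate: float, retention: float = 0.5) -> float:
    """Estimate how many impressions turn into 30-second views.

    hook_rate: share of impressions that reach 3 seconds (assumed definition).
    retention: share of 3-second viewers who reach 30 seconds
               (0.5 is a rounded stand-in for "nearly half").
    """
    if not 0.0 <= hook_rate <= 1.0 or not 0.0 <= retention <= 1.0:
        raise ValueError("rates must be between 0 and 1")
    return impressions * hook_rate * retention


IMPRESSIONS = 100_000       # hypothetical campaign size
BASELINE_HOOK_RATE = 0.25   # hypothetical baseline, not a figure from any study

# Compare the baseline against a 5-point and a 10-point hook rate uplift.
for uplift_points in (0, 5, 10):
    rate = BASELINE_HOOK_RATE + uplift_points / 100
    views = thirty_second_views(IMPRESSIONS, rate)
    print(f"hook rate {rate:.0%}: ~{views:,.0f} thirty-second views")
```

Under these assumed inputs, each extra point of hook rate is worth roughly 500 additional thirty-second views per 100,000 impressions.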

"But Nobody Can Tell It's AI"

This is the objection you'll hear from every creative team excited about AI video tools. And at a conscious level, they're partly right.

Runway's own "Turing Reel" study, published in early 2026, found that over 90% of participants could not reliably distinguish the company's Gen-4.5 outputs from real video. Overall detection accuracy was just 57.1%, barely above chance.

But dig into the data. Human-related videos (faces, hands, actions) were significantly easier to detect, with accuracy ranging from 58% to 65%. Animals and architecture actually fell below chance (45-47%), meaning participants were more likely to mistake AI-generated footage for real. The detection gap is specifically concentrated in human content. The exact type of content that matters most for ad hooks.

And remember the University of Sydney finding: conscious detection and subconscious neural response are two different things. Your audience might not be able to tell you why they scrolled past your ad. But their N170 component already made the call.

For a deeper analysis of the Runway study's implications, see our breakdown in The 90% Problem: Runway's Own Study Shows Most Can't Tell, But Your Scroll Metrics Can.

1.5 seconds to decide. The brain's face response fires well inside that window.

The Trust Penalty Compounds the Problem

The subconscious detection issue doesn't exist in isolation. It stacks on top of a growing conscious distrust of AI content.

According to Animoto's 2026 State of Video Report, 83% of U.S. consumers believe they can identify AI-generated videos. Among those who have watched a video they suspected was AI-generated, 36% say it lowered their trust in the brand behind it.

The top telltales consumers report: robotic gestures (67%), unnatural voices (55%), and lack of emotional tone (51%). That last one is critical. Emotional tone is exactly what reaction clips and b-roll hooks need to convey in order to stop the scroll.

So you're facing a dual problem. At the subconscious level, the brain's face-processing system flags the content as not-quite-right within 170ms. At the conscious level, a growing percentage of your audience is actively watching for signs of AI. Together, these create what researchers call a "trust penalty," a measurable reduction in brand perception that occurs when audiences detect or suspect AI involvement. This is the core reason a video marketplace built around authentic content (real faces, real emotion, sourced from real creators) exists as a category distinct from stock footage or AI generation.

The Nuremberg Institute for Market Decisions documented this in a 2025 study: simply labeling an ad as AI-generated makes people see it as less natural and less useful, which lowers ad attitudes and willingness to research or purchase. You don't even need to get caught. The suspicion alone is enough.

For more on the trust penalty, read The Trust Penalty: What Happens When Viewers Suspect Your Video Is AI.

What This Means for Your Ad Creative

The practical implications are straightforward.

Your opening frame matters more than anything. If your first 170 milliseconds feature an AI-generated face, you've already lost a measurable percentage of your audience before they're even aware of what they're watching. Use a real human face with a real expression. One face, not a group. Close-up, not wide shot. The brain responds to individual faces more strongly.

Hook rate is downstream of neural processing. The 5-to-10-point hook rate advantage that real human presenters enjoy over polished brand content isn't a style preference. It's a biological response. Your brain engages more deeply with authentic faces, which translates to longer view times, which signals quality to the platform algorithm, which lowers your CPM.

Emotion must be genuine. The lack of emotional tone was cited by 51% of consumers as an AI telltale. Real human micro-expressions, the tiny movements around the eyes and mouth that signal genuine feeling, are precisely what AI struggles to replicate and precisely what the brain's face-processing system is tuned to detect.

Testing with real clips is now a strategic advantage. If your competitors are scaling AI-generated b-roll while you're testing authentic human reaction clips, you have a biological edge they cannot close with better prompts or newer models. The N170 doesn't care how good the AI gets at fooling conscious observers. It processes configural information at a level below awareness. Platforms like LatinaUGC give brands access to a clip library of genuine reaction videos from Latin creators, with lifetime commercial rights, so testing at scale doesn't require booking individual shoots.
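If you run that test, it helps to check whether a hook rate gap is bigger than noise. One common way to do that, though not the only one, is a two-proportion z-test. The sketch below uses only Python's standard library. The function name, the view counts, and the definition of hook rate as three-second views divided by impressions are all assumptions for illustration, not figures from any study cited here.

```python
import math


def hook_rate_z_test(hooks_a: int, impressions_a: int,
                     hooks_b: int, impressions_b: int) -> tuple[float, float, float]:
    """Two-proportion z-test on hook rates for two ad creatives.

    Returns (difference in hook rate, z statistic, two-sided p-value).
    """
    if impressions_a <= 0 or impressions_b <= 0:
        raise ValueError("impressions must be positive")

    rate_a = hooks_a / impressions_a
    rate_b = hooks_b / impressions_b

    # Pooled rate under the null hypothesis that both creatives hook equally well.
    pooled = (hooks_a + hooks_b) / (impressions_a + impressions_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b))
    if std_err == 0:
        raise ValueError("cannot test when the pooled hook rate is exactly 0 or 1")

    z = (rate_a - rate_b) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_a - rate_b, z, p_value


# Hypothetical test: creative A uses a real reaction clip, creative B uses AI b-roll.
diff, z, p = hook_rate_z_test(hooks_a=300, impressions_a=1_000,
                              hooks_b=250, impressions_b=1_000)
print(f"hook rate difference: {diff:+.1%}, z = {z:.2f}, p = {p:.3f}")
```

A small p-value suggests the gap is unlikely to be chance alone. With low impression counts, even a 5-point difference can fail this check, so it is worth letting each creative gather enough views before picking a winner.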

For tactical guidance on building scroll-stopping hooks with real faces, see The 1.5-Second Window: How Real Human Emotion Stops the Scroll.

The Argument in Brief

The chain of evidence runs like this:

Your brain processes faces through dedicated neural hardware that responds within about 170 milliseconds. This processing detects differences between real and AI-generated faces even when you cannot consciously identify them. In a scroll environment, this subconscious detection produces a subtle disengagement response that manifests as lower hook rates, shorter view times, and higher scroll-through rates. Layered on top of this, conscious consumer distrust of AI content is rising, with 36% reporting reduced brand trust when they suspect AI involvement.

Real human faces with genuine emotional expression bypass both problems. They trigger the full depth of the brain's social processing system. They build rather than erode trust. And they deliver measurably better performance on every metric that matters to a media buyer.

The neuroscience isn't theoretical. The EEG data is published. The performance benchmarks are documented. The question is whether your ad creative is built on a foundation that works with human biology or against it.

Real creators. Real emotion. Ready to test in your next campaign. Browse the Library →

Sources

- University of Sydney, EEG deepfake detection study, reported in The Hill and Science Times, 2022

- Scientific Reports (Nature Portfolio), "Realness of face images can be decoded from non-linear modulation of EEG responses," 2024

- Animoto, "State of Video 2026 Report," January 2026

- Nuremberg Institute for Market Decisions, "Consumer attitudes toward AI-generated marketing content," 2025

- Runway Research, "The Turing Reel," January 2026

- SendShort, six-brand hook rate analysis, cited in Billo, 2025

- MacDorman & Diel, configural processing and uncanny valley research

- Seyama & Nagayama, facial proportions and eeriness study

- UCSD / Ayse Pinar Saygin, fMRI mismatch study

- Digital Consumer Behaviour Report, attention span data, 2025